Rethinking Song Creation Through AI-Driven Composition Systems

There is a common assumption that music creation is inherently technical. That assumption has shaped everything from education to production workflows. But after spending time experimenting with tools like AI Music Generator, it seems the technical barrier may not be essential; it may simply be historical.

What we are seeing now is not just automation, but a redefinition of how music can originate. Instead of beginning with instruments or software, the process can begin with language itself.

Why Traditional Music Workflows Favor Specialists

Layered Complexity Slows Down Creation

Traditional production typically involves:

- Composing melodies

- Designing arrangements

- Recording performances

- Mixing and mastering

Each stage requires a different skill set. This creates specialization, but also fragmentation.

Creative Flow Is Often Interrupted

Because of these layers:

- Ideas must be paused and translated

- Momentum is frequently lost

- Execution becomes a bottleneck

The result is that many concepts never move beyond rough drafts.

How AI Systems Collapse The Workflow Stack

Unified Generation Instead Of Sequential Steps

Rather than separating tasks, the system combines composition, arrangement, and performance into a single generation step.

This does not eliminate complexity—it hides it behind abstraction.

Language As The Primary Interface

The most important shift is that:

- Inputs are descriptive, not technical

- Outputs are structured, not raw

This allows users to think in terms of meaning rather than mechanics.

Understanding The Internal Logic Of Generation

Mapping Intent To Musical Structure

Based on repeated tests, the system appears to interpret:

- Mood descriptors → harmonic frameworks

- Genre labels → instrumentation patterns

- Sentence rhythm → melodic phrasing

This mapping is not perfect, but it is consistent enough to be predictable.
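To make the idea concrete, here is a toy sketch of what such a mapping could look like if it were written out by hand. It is not how the system works internally (that is presumably learned rather than rule-based), and every name and table entry below is a made-up placeholder for illustration.

```python
# Hypothetical lookup tables: illustrative values only, not taken from any real system.
MOOD_TO_HARMONY = {
    "melancholic": "minor key, slow harmonic rhythm",
    "uplifting": "major key, rising progressions",
}
GENRE_TO_INSTRUMENTS = {
    "folk": ["acoustic guitar", "fiddle"],
    "synthwave": ["analog synth", "drum machine"],
}


def phrase_lengths(lyrics: str) -> list[int]:
    """Count words per non-empty line as a crude proxy for sentence rhythm."""
    return [len(line.split()) for line in lyrics.splitlines() if line.strip()]


def interpret(mood: str, genre: str, lyrics: str) -> dict:
    """Combine the three hand-written mappings into one 'musical plan'."""
    return {
        "harmony": MOOD_TO_HARMONY.get(mood, "unspecified"),
        "instruments": GENRE_TO_INSTRUMENTS.get(genre, []),
        "phrase_lengths": phrase_lengths(lyrics),
    }
```

The point of the sketch is only that descriptive inputs can be resolved into structural decisions; a real model would blend these signals rather than look them up.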

Dynamic Arrangement Behavior

Generated tracks often show:

- Gradual build-ups

- Distinct section transitions

- Controlled intensity changes

This suggests that the system models temporal structure, not just sound textures.

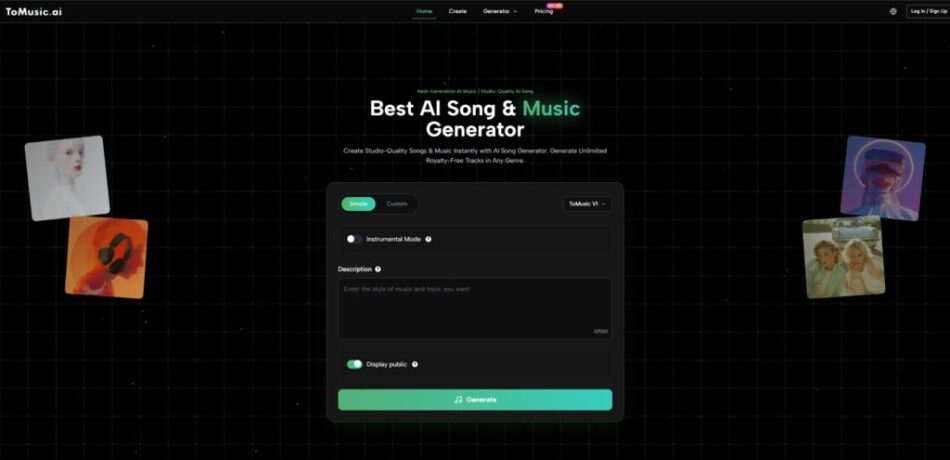

Using The System Step By Step

Step 1: Input Concept Or Lyrics

You can start with:

- A short prompt

- Or a full lyrical composition

The system treats both as structured input.

Step 2: Define Style And Mood

Parameters typically include:

- Genre selection

- Emotional tone

- Vocal inclusion

These guide the generation process.

Step 3: Generate And Iterate

The system outputs a full track. In most cases:

- Multiple generations are needed

- Small prompt adjustments lead to noticeable changes
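The three steps above can be sketched as a small loop. This is a minimal illustration under stated assumptions: `TrackRequest`, `generate`, and `iterate` are hypothetical names, and `generate` is a stand-in that returns metadata rather than calling any real service.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class TrackRequest:
    text: str            # Step 1: a short prompt or full lyrics
    genre: str           # Step 2: style parameters
    mood: str
    vocals: bool = True


def generate(request: TrackRequest, seed: int) -> dict:
    """Stand-in for a real generation call (hypothetical); returns metadata only."""
    return {"seed": seed, "request": request}


def iterate(request: TrackRequest, moods: list[str], seeds=(0, 1)) -> list[dict]:
    """Step 3: try several seeds for each small adjustment to the mood."""
    results = []
    for mood in moods:
        adjusted = replace(request, mood=mood)  # copy with one field changed
        for seed in seeds:
            results.append(generate(adjusted, seed))
    return results
```

Keeping the request immutable and changing one field at a time makes it easier to tell which adjustment caused which change in the output.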

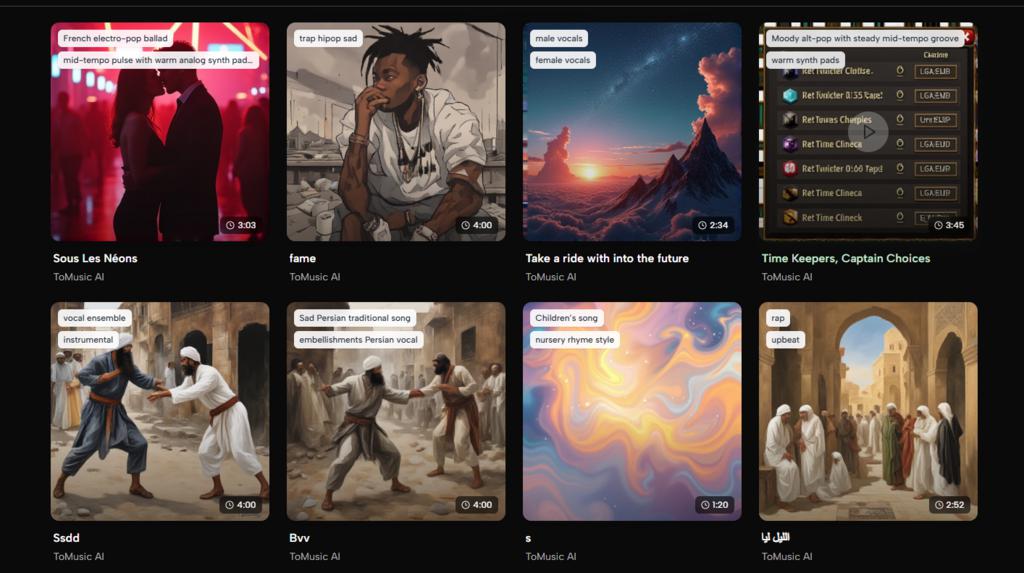

Comparing Creative Approaches Side By Side

| Dimension | Conventional Production | AI Generation |
| --- | --- | --- |
| Starting Point | Instruments or DAW | Text or lyrics |
| Skill Requirement | High | Moderate |
| Time Investment | Significant | Minimal |
| Iteration Cost | High | Low |
| Output Consistency | Controlled | Variable |

This comparison highlights a trade-off: control versus accessibility.

Where Lyrics to Music AI Introduces A New Layer

When switching to Lyrics to Music AI, the experience changes from abstract generation to narrative-driven creation.

Instead of describing a feeling, you define a story. The system then:

- Aligns melody with lyrical phrasing

- Adjusts intensity based on textual emphasis

- Creates structural repetition where appropriate

In my observation, this often produces results that feel more intentional, even if they still require refinement.
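One way to picture "structural repetition" is to look for it in the lyrics themselves. The sketch below is a simple heuristic of my own, not the tool's method: it treats any line that appears more than once as a candidate refrain. The function name `repeated_lines` is hypothetical.

```python
from collections import Counter


def repeated_lines(lyrics: str) -> list[str]:
    """Return lines that occur more than once, in order of first appearance."""
    normalized = [line.strip().lower() for line in lyrics.splitlines() if line.strip()]
    counts = Counter(normalized)
    refrains = []
    for line in normalized:
        if counts[line] > 1 and line not in refrains:
            refrains.append(line)
    return refrains
```

Writing lyrics with a clearly repeated line is a cheap way to signal where a chorus belongs, whatever the system does with that signal internally.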

Practical Scenarios Where This Approach Works Best

Fast Turnaround Media Production

For creators working under time constraints:

- Music can be generated on demand

- Styles can be adapted quickly

This reduces dependency on external resources.

Idea Exploration Without Commitment

For musicians:

- Concepts can be tested instantly

- Different directions can be explored without re-recording

This changes the role of production from execution to exploration.

Educational And Experimental Use

For beginners:

- The system provides immediate feedback

- Musical concepts can be understood through results

This lowers the entry barrier to learning.

Observed Limitations And Trade-Offs

Control Is Abstract, Not Precise

While parameters exist:

- Fine-grained adjustments are limited

- Results depend on interpretation rather than exact control

Output Quality Varies

Even with the same input:

- Some outputs feel cohesive

- Others require regeneration

Consistency is improving but not guaranteed.

Creative Identity Can Blur

Because generation is model-driven:

- Outputs may share stylistic similarities

- Distinctiveness depends on input creativity

Why This Signals A Broader Shift In Creation

The significance of systems like this is not just efficiency. It is the redefinition of creative entry points.

When music can be generated from language:

- The boundary between idea and execution becomes thinner

- The role of technical skill becomes less central

This does not diminish traditional production. Instead, it expands the ways in which music can be created.

What To Pay Attention To Moving Forward

From what I’ve seen, the most interesting developments will likely focus on:

- Better alignment between lyrics and vocal expression

- More consistent structural coherence

- Greater control over arrangement details

These improvements would not replace the current system—they would refine it into something more predictable and expressive.

A Different Way To Think About Making Music

Perhaps the most important takeaway is this:

Music creation no longer needs to begin with sound.

It can begin with language, intention, and narrative—and then be translated into sound through systems that understand both.

That shift does not simplify creativity. It changes where creativity happens.